🔐 AI Security in 2025: Understanding the Shared Responsibility Model

Why Every Enterprise Must Rethink How It Secures AI Across SaaS, PaaS, IaaS, and On-Prem

Artificial Intelligence has moved from experimentation to enterprise-wide adoption, but one critical question is still widely misunderstood:

👉 Who is responsible for securing AI systems?

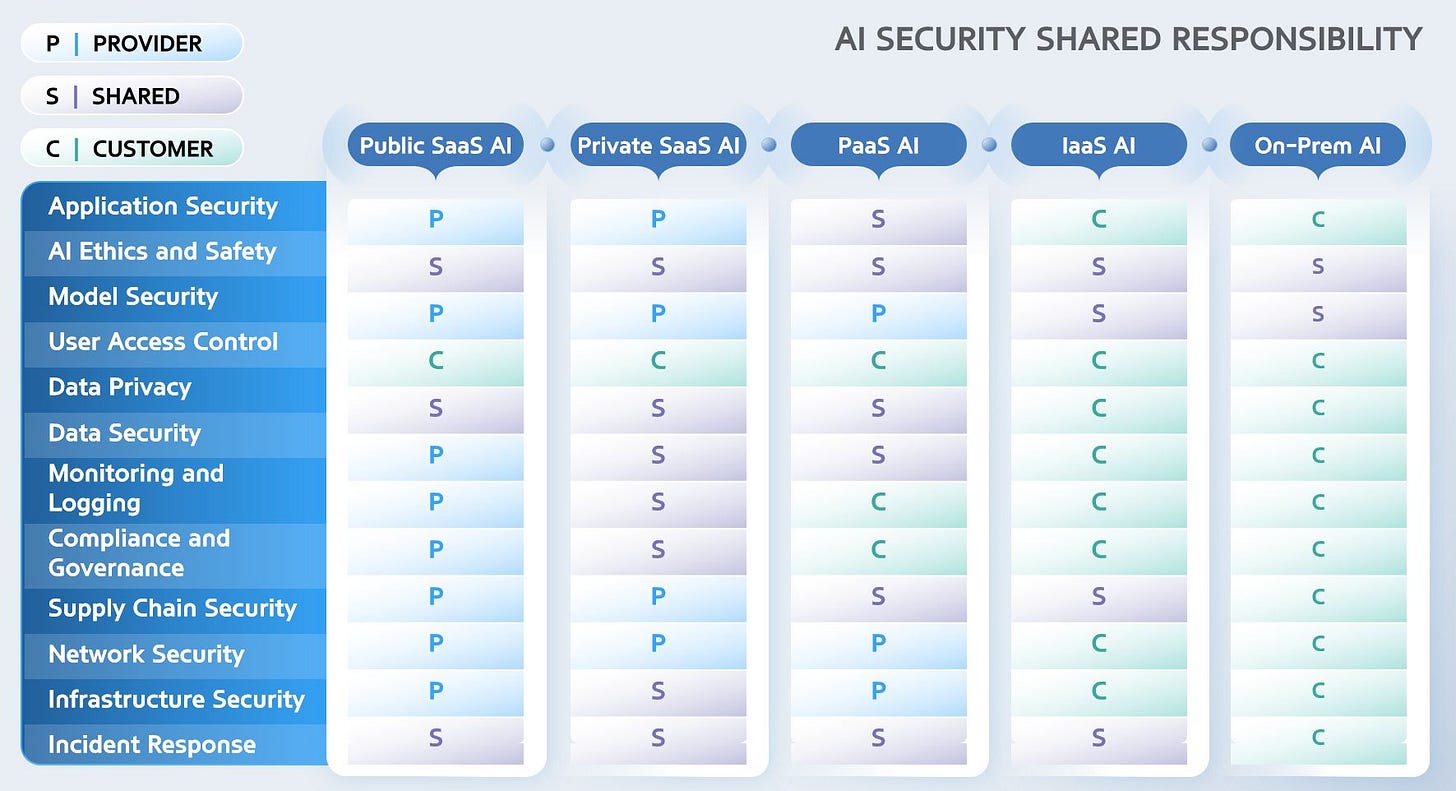

As organizations adopt AI across Public SaaS, Private SaaS, PaaS, IaaS, and On-Prem deployment models, the boundaries of security responsibility shift dramatically. Ignoring this can lead to misconfigured systems, data leakage, compliance violations, or unsafe AI behavior.

The framework below, drawn from the diagram above, lays out the AI Shared Responsibility Model, a working blueprint for CIOs, CISOs, AI leaders, and engineering teams.

🔎 Understanding the Responsibility Split

Across the five deployment models, responsibilities shift between:

P – Provider (Cloud vendor / AI provider)

S – Shared (Both provider & customer)

C – Customer (Your organization)

This model helps clarify who owns what — especially as AI adoption accelerates.

📊 Key Responsibilities Across the AI Stack

1. Application Security

Public SaaS → Provider

IaaS / On-Prem → Customer

AI-enabled applications need secure coding, vulnerability management, and hardened APIs. As you move toward infrastructure control (IaaS, On-Prem), you own more of this security surface.

2. AI Ethics & Safety

Mostly Shared Responsibility

Ensuring fairness, explainability, safe outputs, hallucination control, and compliance with responsible AI policies requires joint effort between provider and customer.

3. Model Security

SaaS → Managed by Provider

PaaS/IaaS → Customer involvement increases

Model poisoning, prompt injection, model inversion, and unauthorized access to the model must be managed according to how much control your team has over the model lifecycle.

4. User Access Control

SaaS → Provider + Customer

On-Prem → Customer

Identity, MFA, RBAC, API keys, and fine-grained authorization decisions shift toward customer ownership as control increases.
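On the customer side, the RBAC piece can be sketched as a role-to-permission lookup. This is a minimal illustration only: the role names, the permission strings, and the `is_authorized` helper are all hypothetical, and a real deployment would normally delegate this to the identity provider or the platform's IAM service.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "ml-engineer": {"model:invoke", "model:deploy"},
    "analyst": {"model:invoke"},
    "auditor": {"logs:read"},
}


def is_authorized(roles, permission):
    """Return True if any of the caller's roles grants the permission."""
    # Unknown roles grant nothing (deny by default).
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
```

Denying by default for unknown roles keeps the check safe when role names drift between the identity system and the application.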

5. Data Privacy & Data Security

PaaS → Shared

IaaS → Customer-controlled

Data governance, retention, anonymization, and privacy-by-design must follow regulatory standards (GDPR/DPDP/CCPA).

As the deployment moves closer to your own infrastructure, your accountability increases.
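One small, concrete piece of privacy-by-design is stripping obvious identifiers from text before it reaches a model you do not host. The sketch below redacts email addresses only. The `redact_emails` name and the `[EMAIL]` placeholder are assumptions, the pattern is deliberately simple, and it is not a substitute for a full anonymization or DLP process.

```python
import re

# Simplified email pattern: good enough for a sketch, not exhaustive.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text, placeholder="[EMAIL]"):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub(placeholder, text)
```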

6. Monitoring, Logging & Observability

SaaS → Provider

IaaS / On-Prem → Customer

AI observability (model drift, data drift, security logs, anomalies) becomes a customer obligation in IaaS and On-Prem deployments.
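To make data drift less abstract, here is one common way to quantify it: the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample versus a recent one. This is a sketch under stated assumptions: the `population_stability_index` name, the ten equal-width bins, and the smoothing constant are illustrative choices, and any alerting threshold is something your team would have to set.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """Compare two numeric samples; 0.0 means identical binned distributions."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Smooth empty bins so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A value near zero suggests the recent data looks like the baseline; larger values suggest the inputs have shifted and the model may need review.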

7. Compliance & Governance

SaaS → Provider

PaaS / IaaS / On-Prem → Customer

Customers must ensure AI policies align with internal governance frameworks and with emerging AI regulations and standards (the EU AI Act, the NIST AI RMF, ISO/IEC 42001).

8. Supply Chain Security

Mostly Provider-owned except in IaaS/On-Prem

Customers must still validate SBOMs, model lineage, and third-party risks—especially when using open-source or fine-tuned models.
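A basic lineage check is to confirm that a downloaded model artifact matches a digest you pinned from a trusted source. The sketch below uses only the Python standard library. The function names are hypothetical, and where the expected digest comes from (an SBOM entry, a signed manifest, a model card) is an assumption left to your process.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large model files fit in memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, expected_hex):
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_of(path), expected_hex.lower())
```

A matching digest shows the file is the one you pinned; it says nothing about whether that model was trustworthy in the first place, so it complements rather than replaces third-party risk review.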

9. Network & Infrastructure Security

SaaS → Provider

IaaS / On-Prem → Customer

Firewalls, segmentation, private endpoints, virtual network (VCN/VPC) rules, edge security, and vulnerability patching become customer responsibilities as you move down the stack.
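As a small illustration of the segmentation idea, the sketch below checks whether a caller's address falls inside an allowlisted private range before a request is let through to a model endpoint. The `10.0.0.0/16` range and the `is_allowed` helper are assumptions for the example. In practice this rule would usually live in the firewall or security-group layer rather than in application code.

```python
import ipaddress

# Hypothetical private range that is allowed to reach the model endpoint.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/16")]


def is_allowed(address):
    """Return True if the address belongs to any allowlisted network."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in ALLOWED_NETWORKS)
```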

10. Incident Response

SaaS → Shared

IaaS & On-Prem → Customer

In SaaS deployments, AI breach response requires joint action by provider and customer, but full accountability shifts to the customer once infrastructure and models are in-house.
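The assignments spelled out in the ten areas above can be collected into a small lookup table, which makes it easy to ask who owns a control in a given deployment model. This is a sketch, not the full diagram: it encodes only the cells stated explicitly in the text, it uses "SaaS" as the text does without separating Public and Private SaaS, and the `owner` and `customer_owned` helpers are hypothetical names.

```python
from typing import Optional

# P = Provider, S = Shared, C = Customer.
# Only cells stated in the text are included; everything else is left unset.
RESPONSIBILITY = {
    "Application Security": {"Public SaaS": "P", "IaaS": "C", "On-Prem": "C"},
    "Model Security": {"SaaS": "P"},
    "User Access Control": {"SaaS": "S", "On-Prem": "C"},
    "Data Privacy & Data Security": {"PaaS": "S", "IaaS": "C"},
    "Monitoring, Logging & Observability": {"SaaS": "P", "IaaS": "C", "On-Prem": "C"},
    "Compliance & Governance": {"SaaS": "P", "PaaS": "C", "IaaS": "C", "On-Prem": "C"},
    "Network & Infrastructure Security": {"SaaS": "P", "IaaS": "C", "On-Prem": "C"},
    "Incident Response": {"SaaS": "S", "IaaS": "C", "On-Prem": "C"},
}


def owner(area: str, model: str) -> Optional[str]:
    """Return 'P', 'S', or 'C', or None when the text does not state the cell."""
    return RESPONSIBILITY.get(area, {}).get(model)


def customer_owned(model: str) -> list:
    """List the areas the text assigns to the customer for a deployment model."""
    return sorted(a for a, cells in RESPONSIBILITY.items() if cells.get(model) == "C")
```

Returning `None` for unstated cells is a deliberate choice: it forces the gap to be resolved against the provider's contract and documentation instead of being guessed.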

🧩 Why This Model Matters Now

AI systems introduce new attack surfaces and require a rethinking of traditional cloud security. Organizations must understand:

✔️ Where your security responsibility ends and the provider’s begins

✔️ How responsibilities change across AI deployment models

✔️ Which teams own which controls (IT, Security, Data, AI, Legal)

✔️ How to build compliance-ready AI environments

Misalignment here is one of the largest hidden risks in enterprise AI programs.

🏁 Final Takeaway

AI security is no longer optional; it is strategic.

And securing AI requires shared responsibility, not siloed ownership.

Enterprises that understand this model will:

✨ Reduce risk

✨ Ensure regulatory compliance

✨ Build trustworthy AI pipelines

✨ Accelerate AI adoption safely and responsibly

Those that don’t remain exposed to serious operational, ethical, and compliance risks.